Summary. Accurately identifying IoT devices is a difficult problem. We walk through some of the successes and failures.

(You can find Part 1 of this series at this link.)

Problem 2: How to identify IoT devices?

Main problem. Let’s say you’re using IoT Inspector for the first time. You have dozens of smart devices. You scan your home network with IoT Inspector. You see a list of IP addresses and MAC addresses.

You don’t know which IP/MAC address is your device of interest. IP/MAC addresses aren’t readable to you. How do you know which device to inspect if you don’t know what it is in the first place?

Method 1: Ask other people (i.e., crowdsource)

IoT Inspector currently gives each user an option to label their devices. Below is a screenshot of me running IoT Inspector, where I am asked to optionally enter the name and manufacturer of this device.

Since we have a dataset of some users labeling some of their devices, it should be relatively easy to build a machine learning model to automatically identify devices for other users, right?

Not quite. There are a few challenges.

Challenge 1: Not everyone labels their devices. As we discovered in our 2020 paper, fewer than 25% of the monitored devices were labeled by users, and fewer than 50% of the users labeled at least one of their devices.

Challenge 2: Some labels are inconsistent. First, a user could misspell words in the labels. In our dataset (as of the middle of 2022), for example, 292 devices are labeled with the vendor name “philips” (correct spelling), but 85 devices are labeled as “phillips” and 7 devices as “philip’s.”

Another source of inconsistency is that the same type of device can be named in multiple ways. For example, Google Home has multiple models, such as Google Home and Google Home Mini, which users could consider either a smart speaker or a voice assistant. Also, an Amazon Echo could be labeled as “Amazon Echo” or “Amazon Alexa”.

Similarly, a vendor could also be labeled in different ways. For example, Ring, a surveillance camera vendor, was acquired by Amazon, so Ring devices are actually Amazon devices. Also, we have seen instances of Logitech Harmony Remote Controllers labeled as both “logitech” and “logitech harmony” as the vendor, while in reality the vendor should be “logitech” alone.

Current fix: Hand-written rules. I won’t bore you with the details here. You can find out more in Section 5.1 of our paper. This is not the best solution, and we’re exploring automated methods to reduce the number of hand-written rules.
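To give a flavor of what hand-written rules can look like, here is a minimal sketch in Python. It is not the rule set from the paper: the alias table, the function name `normalize_vendor`, and the choice to fold “ring” into “amazon” are all assumptions made for illustration, using only the examples mentioned above.

```python
import re

# Hypothetical alias table (illustrative only, not the rules from the paper).
# Keys are variants seen in user labels; values are the standardized vendor.
VENDOR_ALIASES = {
    "phillips": "philips",
    "philip's": "philips",
    "logitech harmony": "logitech",
    "ring": "amazon",  # assumption: treat Ring devices as Amazon devices
}


def normalize_vendor(raw_label: str) -> str:
    """Clean up a user-entered vendor label and apply the alias table."""
    # Lowercase, trim, and turn curly apostrophes into straight ones.
    cleaned = raw_label.strip().lower().replace("\u2019", "'")
    # Collapse runs of whitespace so "logitech   harmony" matches the table.
    cleaned = re.sub(r"\s+", " ", cleaned)
    # Unknown labels pass through unchanged.
    return VENDOR_ALIASES.get(cleaned, cleaned)


if __name__ == "__main__":
    for label in ["Philips", "Phillips", "philip\u2019s", " Logitech  Harmony "]:
        print(repr(label), "->", normalize_vendor(label))
```

Every new misspelling needs a new table entry, which is why this approach does not scale well.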

Method 2: Ask the device itself

In some cases, you can ask a device what it is. Many devices use protocols such as mDNS and UPnP to announce their own identities. Because these are self-announcements, they tend to be very accurate. The catch is that not many devices support such protocols.
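As a rough illustration of what “asking the device” can look like on the wire, the sketch below sends an SSDP M-SEARCH query (the discovery step of UPnP) and parses whatever responses come back. It uses only Python’s standard library. The function names are made up for this example, and this is not how IoT Inspector or any particular discovery library implements it.

```python
import socket

SSDP_ADDR = ("239.255.255.250", 1900)  # standard SSDP multicast group and port


def build_msearch(search_target: str = "ssdp:all", mx: int = 2) -> bytes:
    """Build an SSDP M-SEARCH request asking devices to announce themselves."""
    lines = [
        "M-SEARCH * HTTP/1.1",
        f"HOST: {SSDP_ADDR[0]}:{SSDP_ADDR[1]}",
        'MAN: "ssdp:discover"',
        f"MX: {mx}",            # max seconds a device may wait before replying
        f"ST: {search_target}",  # search target; "ssdp:all" asks everything
        "",
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def parse_ssdp_response(payload: bytes) -> dict:
    """Turn the HTTP-style headers of an SSDP response into a dict."""
    text = payload.decode("utf-8", errors="replace")
    headers = {}
    for line in text.split("\r\n")[1:]:  # skip the status line
        name, sep, value = line.partition(":")
        if sep:
            headers[name.strip().upper()] = value.strip()
    return headers


def discover(timeout: float = 3.0) -> list:
    """Send one M-SEARCH and collect (ip, headers) pairs until the timeout."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.settimeout(timeout)
    responses = []
    try:
        sock.sendto(build_msearch(), SSDP_ADDR)
        while True:
            try:
                data, (ip, _port) = sock.recvfrom(65507)
            except socket.timeout:
                break
            responses.append((ip, parse_ssdp_response(data)))
    finally:
        sock.close()
    return responses
```

Headers such as `SERVER` and `LOCATION` often hint at a device’s identity, but a device that does not speak SSDP simply never replies.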

In an older version of our paper, we find that mDNS and UPnP announcements are highly accurate in identifying devices.

Experiment setup. To illustrate, we obtain the following information from each device:

- FingerBank, a proprietary API that takes the OUI of a device, user agent string (if any), and five domains contacted by the device; it returns a device’s likely name (e.g., “Google Home” or “Generic IoT”).

- Netdisco, an open-source library that scans for smart home devices on the local network using SSDP, mDNS, and UPnP. The library parses any subsequent responses into JSON strings. These strings may include a device’s self-advertised name (e.g., “google_home”).

- Hostname from DHCP Request packets. A device being monitored by IoT Inspector may periodically renew its DHCP lease; the DHCP Request packet, if captured by IoT Inspector, may contain the hostname of the device (e.g., “chromecast”).

- HTTP User Agent (UA) string. IoT Inspector attempts to extract the UA from every unencrypted HTTP connection. If, for instance, the UA contains the string “tizen,” it is likely that the device is a Samsung Smart TV.

- OUI, extracted from the first three octets of a device’s MAC address. We translate OUIs into names of manufacturers based on the IEEE OUI database. We use OUI to validate device vendor labels only and not device category labels.

- Domains: a random sample of five registered domains that a device has ever contacted, based on the traffic collected by IoT Inspector. If one of the domains appears to be operated by the device’s vendor (e.g., by checking the websites associated with the domains), we consider the device to be validated.
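Two of the signals above, the OUI and the User Agent, are simple enough to sketch in a few lines of Python. This is an illustration under assumptions, not IoT Inspector’s code: the function names are hypothetical, and the lookup table holds a single placeholder entry rather than real IEEE assignments.

```python
import re
from typing import Optional

# Placeholder table for illustration; a real lookup would load the IEEE OUI
# database. "02:00:00" is a made-up entry, not an actual vendor assignment.
EXAMPLE_OUI_TABLE = {"02:00:00": "ExampleVendor"}


def extract_oui(mac: str) -> str:
    """Return the first three octets of a MAC address as 'XX:XX:XX'."""
    hex_digits = re.sub(r"[^0-9A-Fa-f]", "", mac)  # drop ':' or '-' separators
    if len(hex_digits) != 12:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    oui = hex_digits[:6].upper()
    return ":".join(oui[i:i + 2] for i in (0, 2, 4))


def vendor_from_mac(mac: str, table: dict) -> Optional[str]:
    """Look up the manufacturer for a MAC address, or None if unknown."""
    return table.get(extract_oui(mac))


def guess_from_user_agent(user_agent: str) -> Optional[str]:
    """Apply the 'tizen' heuristic described above; None if it does not match."""
    if "tizen" in user_agent.lower():
        return "Samsung Smart TV"
    return None


if __name__ == "__main__":
    print(vendor_from_mac("02-00-00-ab-cd-ef", EXAMPLE_OUI_TABLE))
    print(guess_from_user_agent("Mozilla/5.0 (SMART-TV; Linux; Tizen 5.0)"))
```

Note that the OUI only tells you who made the network chip, which is one reason it is used here for vendor labels and not for category labels.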

Method. Our goal is to validate a device’s standardized category and vendor labels using each of the six methods above. However, this process is difficult to fully automate. In particular, FingerBank’s and Netdisco’s outputs, as well as the DHCP hostnames and UA strings, have a large number of variations; it would be a significant engineering challenge to develop regular expressions to recognize these data and validate them against our labels. Furthermore, the validation process often requires expert knowledge. For instance, if a device communicates with xbcs.net, we can validate the device’s “Belkin” vendor label from our experience with Belkin products in our lab. However, doing such per-vendor manual inference at scale would be difficult.

Given these challenges, we randomly sample at most 50 devices from each category (except “computers” and “others”). For every device, we manually validate the category and vendor labels using each of the six methods (except for OUI and domains, which we only use to validate vendor labels). This random audit approximates the accuracy of the standardized labels without requiring manual validation of all 8,131 labeled devices.

For each validation method, we record the outcome for each device as follows:

- No Data. The data source for a particular validation method is missing. For instance, some devices do not respond to SSDP, mDNS, or UPnP, so Netdisco would not be applicable. In another example, a user may not have run IoT Inspector long enough to capture DHCP Request packets, so using DHCP hostnames would not be applicable.

- Validated. We successfully validated the category and vendor labels using one of the six methods — except for OUI and domains, which we only use to validate vendor labels.

- Not Validated. The category and/or vendor labels are inconsistent with the validation information because, for instance, the user may have made a mistake when entering the data and/or the information from the validation methods is wrong. Unfortunately, we do not have a way to distinguish these two reasons, especially when the ground truth device identity is absent. As such, “Not Validated” does not necessarily mean that the user labels are wrong.

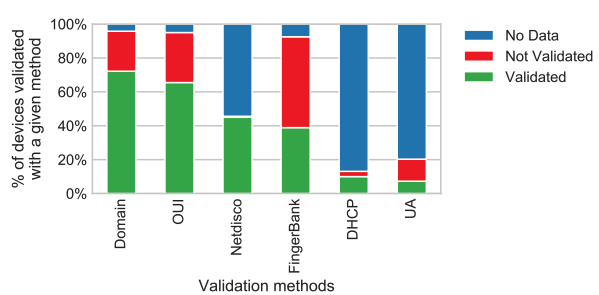

Results of validation. In total, we manually sample 522 devices from our dataset: 22 devices in the “car” category (because there are only 22 devices in the “car” category) and 50 devices in each of the remaining 10 categories. The chart below shows the percentage of devices whose category or vendor labels we have manually validated using each of the six validation methods.

Percentage of the 522 sampled devices whose vendor or category labels we can manually validate using each of the six validation methods.

Trade-off in accuracy vs. availability. As you can see, there is a trade-off between the availability of a validation method and its effectiveness. For example, the Netdisco method is available on fewer devices than the Domain method, but where its data is available, Netdisco validates a larger share of devices. Overall, we can validate 72.2% of the sampled devices using Domains but only 45.0% of the sampled devices using Netdisco.

One reason for this difference is that only 4.2% of the sampled devices do not have the domain data available, whereas 54.4% of the sampled devices did not respond to our Netdisco probes and thus lack Netdisco data. If we consider, for each method, only the devices that have the corresponding data, 75.4% of the devices with domain data can be validated with domains, and 98.7% of the devices with Netdisco data can be validated with Netdisco.

These results suggest that although Netdisco data is less prevalent than domain data, Netdisco is more effective for validating device labels.
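To see how the numbers fit together, here is a quick back-of-the-envelope check in Python. It assumes the overall validation rate is simply the share of devices that have data multiplied by the validation rate among those devices; the function name is made up for this example.

```python
def overall_rate(no_data_share: float, rate_given_data: float) -> float:
    """Overall validated share = (share with data) x (validated share among those)."""
    return (1.0 - no_data_share) * rate_given_data


# Figures quoted in the text above.
domains = overall_rate(no_data_share=0.042, rate_given_data=0.754)
netdisco = overall_rate(no_data_share=0.544, rate_given_data=0.987)

print(f"Domains:  {domains:.1%} of all sampled devices validated")
print(f"Netdisco: {netdisco:.1%} of all sampled devices validated")
```

The two products come out to roughly 72.2% and 45.0%, matching the overall figures: Netdisco’s lower overall number is a matter of availability, not accuracy.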

What about domains? Despite their availability now, domain samples may not be the most prevalent data source for device identity validation in the near future, because domain names will likely be encrypted:

- DNS over HTTPS or over TLS is gaining popularity, making it difficult for an on-path observer to record the domains contacted by a device.

- Moreover, the SNI field — which includes the domain name — in TLS Client Hello messages may be encrypted in the future.

These technological trends will likely require future device identification techniques to be less reliant on domain information.